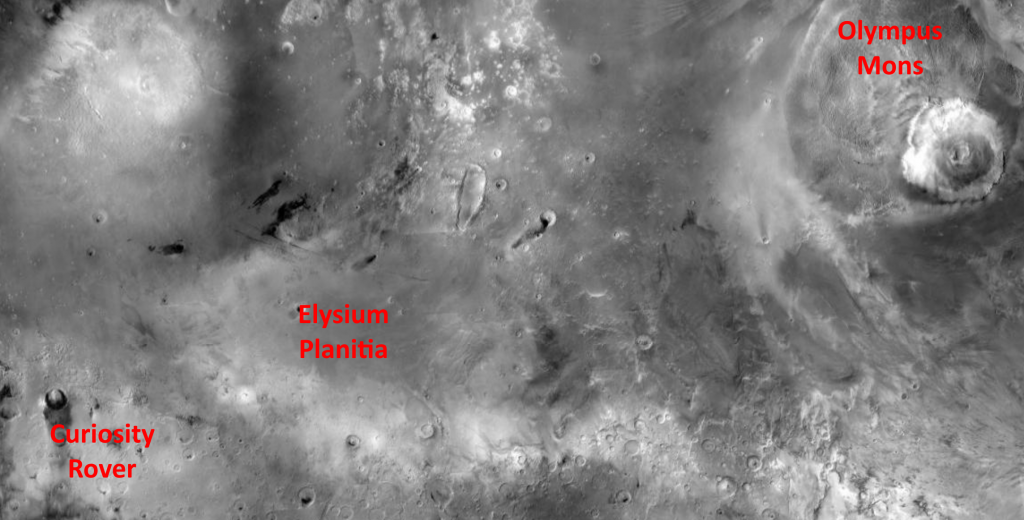

I thought I’d take this opportunity to share out a recent picture returned by NASA’s Curiosity Rover of its environment. Indeed, although it shows as a single picture, it was in fact assembled from over a thousand separate images, carefully aligned each with the next in order to give a composite panorama.

There’s also a YouTube video exploring this in more detail and highlighting particular features – the link is below.

Now, as well as the intrinsic interest of this picture, it has also been fun for me to locate it in relation to some of the Martian locations used in my novel Timing, part of which is set on Mars.

Olympus Mons is the largest mountain on Mars – the second highest that we know of in the entire solar system. In Timing, the main characters Mitnash Thakur and his AI persona assistant Slate first investigate a finance training school close to Olympus Mons, and subsequently visit a gambling house in a settlement on Elysium Planitia. Curiosity, and the panorama picture, is right at the edge of Elysium Planitia. So the terrain in that part of Timing would be not unlike the Curiosity picture.

The following extract from Timing is when Mitnash and Slate arrive on Mars, having taken a shuttle down from the moon Phobos. But before that, here’s the link to the video I mentioned (https://youtu.be/X2UaFuJsqxk).

Extract from Timing

The shuttle had peaked in its hop, and was now descending again. The pilot had flicked the forward view up on one of the screens, so we could all enjoy the sight of the second tallest mountain in the solar system. All twenty-two kilometres of it. We were already below the level of the summit. Gordii Fossae, where the training college was located, was behind the right flank from this angle, and I didn’t expect to see it.

Before long we were grounding at the dock. I stood up, and was treated to some curious looks from the remaining passengers. I was the only one alighting here. At a guess, it was not a popular stop – those hardy souls who wanted to bag the summit of Olympus would normally take a different route altogether, first to Lycus Sulci basecamp, then up and over the scarp before trekking to the peak.

I went through into the reception hall with the minimum of bureaucratic fuss, targeted on all sides by glossy ads inviting me to sample the pleasures of Martian laissez faire. Then after the last gate, the narrow entrance tunnel opened into a wider dome, and there was a heavy-set man with dark hair waiting, looking slightly bored. Seeing me, he stepped forward and held out a hand.

“Mitnash Thakur? I’m Teemu Kalas. Welcome to Gordii Fossae.”

Teemu insisted on taking my bag, though it was hardly a burden, and we chatted idly as he ushered me through several linked domes to a long hall. He had a heavy, northern European accent. I couldn’t decide if he made everything sound very serious, or a complete joke. Slate whispered to me that he was one of the two vice-principals of the training centre. He opened a locker, pulled out two suits and passed one to me, gesturing to the airlock nearby.

“Here you are, Mitnash. We got your size from your persona. Slate, she’s called, isn’t she? Now, be warned that it’s not a perfect fit. Should be close enough though. And it’s the nearest we had, anyway. I’ll signal the truck to attach to the lock by concertina, but we always wear suits on the journey. Protocol, you know.”

“How far is the school from here?”

“About ten kilometres to the main teaching block. A little bit further to the dormitory entrance where I’ll be taking you. A lot can happen on a journey like that.”

He glanced to see how I was fastening the suit and seemed satisfied. We left the lids open, but ready to snap down if need be. Then we cycled through the lock and into a vehicle. At a guess, it was about the size of a small bus. The engine was already filling the cabin with an electric hum, and after a couple of checks he tapped a toggle and leaned back.

The windows looked out on a set of very large tyres on either side, but beyond that, the Martian landscape stretched away to the horizon, drab and dusty, with jagged blackish bands of rock emerging from the sand at intervals. On our right, the slopes of Olympus Mons stretched hugely up into the pale sky. At this point, the scarp which was so prominent around most of the northern rim dipped down, to merge smoothly into the surrounding terrain as you continued on south. It still looked fearsome just here.

“There. The onboard system will get us the rest of the way. It’s twenty minutes from here. I’ve got the centre Sarsen twins supervising it just in case, but it’s hardly a new journey. Now, you’ll have a lot of questions, but Mikko – that’s Mikko Pulkkinen, the principal – said to wait for all that until he meets you tomorrow. The rest of today is yours, to get acclimatised. You’ll get more tired than you expect. Been on Phobos long?”