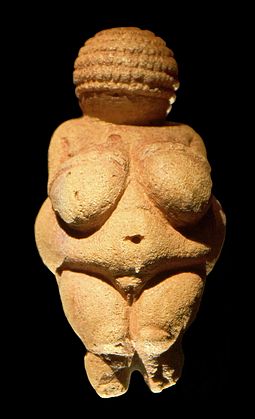

I thought it was long overdue that I wrote something on poetry – my historical fiction books lean heavily on poetry, and my various science fiction and fantasy books are regularly built around music and singing – something I reckon will forever be a part of human experience, wherever we end up living. Music has transformed itself many times over since our prehistoric forebears first accompanied their own voices on wind, string or percussion instruments. We have listened to and participated in music played solo or in groups, small and large.

But today I am writing about poetry, not music, though the two are very closely related – probably the topic of another blog post sometime. Six of the nine Greek Muses were explicitly involved with music and poetry, and the focus of the other three was on pursuits which depended heavily on them. In the myths, the Muses are not just engaged in fun and celebration – they also turn up to defend their reputation and avenge themselves on mortals who presume to challenge their primacy.

When most of us in the modern world think of poetry, we typically imagine lines of regular beats with some sort of rhyme scheme – either adjacent lines rhyming in an AA-BB pattern, or alternating lines sounding like AB-AB, or the looser version AB-CB. For example, the American anthem, The Star-Spangled Banner, uses AB-AB for the first four lines of each stanza, and AA-BB for the last four. At the casual end of the scale, Mary Had a Little Lamb uses AB-CB. We all know that “real” poetry does not always adhere to these basic patterns, but if we are asked to come up with a rhyme on the spur of the moment, these are the schemes that will probably come to mind.

Most of the earliest poetry that we have, however, is not built around rhyme, nor indeed around a regular pulse or metre. Instead, early poetry from Mesopotamia and Egypt, followed later all around the ancient Near East and so also appearing in the Hebrew Bible, was built around the idea of parallelism. (Ages ago I wrote a post about how this pattern also turns up in the much more recent Finnish epic Kalevala.) Pairs of lines expressed the same idea in different ways, without special regard for the exact number of syllables or metrical beats, or any rhyming pattern. Something like the start of the Ugaritic epic poem of King Keret:

The clan of Keret died out;

the house of the king was destroyed

Now the advantage of parallelism, from the point of view of other people trying to understand it, is that it is comparatively easy to translate. There will almost certainly be subtleties of the language, wordplay and the like, which don’t translate, but the basics certainly do. But poets rapidly wanted to make their work richer and more complex. So variations of parallelism arose – words omitted or added in the basic couplets, changes of word order to invert the second line, triplet forms extending the basic pairs, and so on. The parallelism of words was enhanced by using alliteration of consonants to reinforce the connecting sounds.

So the stage was set for end-rhyme to make its appearance in poetry – the pattern that we are most used to today. You can look at end-rhyme as just another form of parallelism – but instead of the line endings being signalled by words with parallel meaning, the opposite is happening. The correspondence of rhyming words at the line ends makes us put them in parallel, and so establishes links between words which otherwise would remain separate in our minds. The more appropriately creative the rhyme, the more striking the connection becomes. William Blake’s The Tyger has the following lines, provoking us to make connections between spears and tears…

When the stars threw down their spears

And water’d heaven with their tears

And again, poets play with our expectations of rhyme in order to jolt us into a different interpretation. This device, sometimes called a “censored rhyme”, is often used to suggest politically subversive or sexually risqué themes – the actual words themselves are typically innocent, but the expectation aroused in the listener is not. My favourite example is Sweet Violets… almost every line sets the listener up to expect a particular rhyming word, and then veers away…

There once was a farmer who took a young miss

In back of the barn where he gave her a lecture

On horses and chickens and eggs

And told her that she had such beautiful manners

That suited a girl of her charms

A girl that he wanted to take in his

Washing and ironing and then if she did

They could get married and raise lots of

Sweet violets

Sweeter than all the roses…

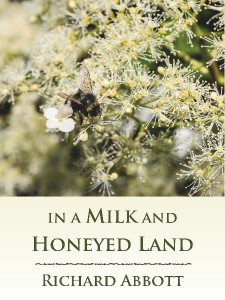

This all has a lot to do with writing. Some authors want to include real poems in their books, as opposed to saying something along the lines of “then they sang a song”. So then you have to decide how your poem is to be structured in a formal sense, and whether you want that to mirror the conventions of the time of the setting. So a book set in the ancient Near East – if it is to be authentic to its era – would not use rhyming couplets, but parallel ones. A story set in Anglo-Saxon times would use the conventions of Germanic poetry, built heavily around alliteration and stock verbal images with little if any rhyme. A fantasy or science fiction book is free to build up its own conventions as to how poetry in that world is created – but would be enriched by making those fictional conventions fully integrated into the wider world-building. It’s a habit of thought that Tolkien was a master of – he had the advantage of being able to draw on a wide variety of early conventions of song and poetry, and he deployed these conventions so carefully that you can tell, almost at first read of one of his poems, which of the various peoples of Middle-earth are in focus (see the Open Culture web site for some readings).

To close, here’s a video of ancient Irish music, found at http://www.ancientmusicireland.com. The site has a wealth of information and live demonstrations, with (to my ears) odd resonances in the music of Blade Runner…